Introduction and Examples

DEFINITION: A matrix is an ordered rectangular array of numbers. Matrices can be used to represent systems of linear equations, as will be explained below.

Here is an example:
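As an illustration (this particular matrix is chosen here and is not taken from the original), a matrix with 2 rows and 3 columns is called a 2×3 matrix, and the element in row i and column j is written $a_{ij}$:

$$A = \begin{pmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \end{pmatrix}, \qquad a_{12} = 2, \quad a_{21} = 4, \quad a_{23} = 6$$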

Matrix Addition and Subtraction

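DEFINITION: Two matrices A and B can be added or subtracted if and only if their dimensions are the same (i.e. both matrices have the same number of rows and columns). Take two m×n matrices:

$$A = \begin{pmatrix} a_{11} & \cdots & a_{1n} \\ \vdots & \ddots & \vdots \\ a_{m1} & \cdots & a_{mn} \end{pmatrix}, \qquad B = \begin{pmatrix} b_{11} & \cdots & b_{1n} \\ \vdots & \ddots & \vdots \\ b_{m1} & \cdots & b_{mn} \end{pmatrix}$$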

Addition

If A and B above are matrices of the same dimensions, then their sum A + B is found by adding the corresponding elements: $(A + B)_{ij} = a_{ij} + b_{ij}$.

Here is an example of adding A and B together.

Subtraction

If A and B are matrices of the same dimensions, then their difference A − B is found by subtracting the corresponding elements: $(A - B)_{ij} = a_{ij} - b_{ij}$.

Here is an example of subtracting matrices.
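A minimal Python sketch of both operations, assuming matrices are stored as plain nested lists of rows rather than using any library; the helper names `same_dimensions`, `mat_add` and `mat_sub` are illustrative, not from the original. The dimension check mirrors the definition: the operation is refused unless both matrices have the same number of rows and columns.

```python
from typing import List

Matrix = List[List[float]]


def same_dimensions(a: Matrix, b: Matrix) -> bool:
    """True if a and b have the same number of rows and of columns."""
    return len(a) == len(b) and all(len(ra) == len(rb) for ra, rb in zip(a, b))


def mat_add(a: Matrix, b: Matrix) -> Matrix:
    """Element-wise sum: (A + B)[i][j] = a[i][j] + b[i][j]."""
    if not same_dimensions(a, b):
        raise ValueError("matrices must have the same dimensions")
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def mat_sub(a: Matrix, b: Matrix) -> Matrix:
    """Element-wise difference: (A - B)[i][j] = a[i][j] - b[i][j]."""
    if not same_dimensions(a, b):
        raise ValueError("matrices must have the same dimensions")
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(mat_add(A, B))  # [[6, 8], [10, 12]]
print(mat_sub(A, B))  # [[-4, -4], [-4, -4]]
```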

Matrix Multiplication

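DEFINITION: Matrix multiplication AB can be performed only when the number of columns of the first matrix A is the same as the number of rows of the second matrix B. If A is m×n and B is n×p, the product AB is an m×p matrix whose elements are $(AB)_{ij} = \sum_{k=1}^{n} a_{ik} b_{kj}$.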

Here is an example of matrix multiplication for two 2×2 matrices.

Here is an example of matrix multiplication for two 3×3 matrices.

Note: in general A×B is not the same as B×A; matrix multiplication is not commutative.
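A minimal Python sketch of the product rule $(AB)_{ij} = \sum_k a_{ik} b_{kj}$, assuming matrices are stored as nested lists of rows (the name `mat_mul` is illustrative). The size check enforces the columns-equals-rows condition, and the usage lines show that swapping the order of two 2×2 matrices changes the result.

```python
from typing import List

Matrix = List[List[float]]


def mat_mul(a: Matrix, b: Matrix) -> Matrix:
    """Product AB, where (AB)[i][j] = sum over k of a[i][k] * b[k][j]."""
    n = len(b)  # number of rows of B
    if any(len(row) != n for row in a):
        raise ValueError("number of columns of A must equal number of rows of B")
    p = len(b[0])  # number of columns of B
    return [[sum(row[k] * b[k][j] for k in range(n)) for j in range(p)] for row in a]


A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
print(mat_mul(A, B))  # [[2, 1], [4, 3]]
print(mat_mul(B, A))  # [[3, 4], [1, 2]]
```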

Transpose of Matrices

DEFINITION: The transpose of a matrix is found by exchanging rows for columns. If $A = (a_{ij})$ is an m×n matrix, its transpose is the n×m matrix:

$A^T = (a_{ji})$, i.e. the element in row i and column j of $A^T$ is the element in row j and column i of A.

For example, the transpose of a matrix would be:

In the case of a square matrix (m = n), the transpose can be used to check whether a matrix is symmetric: A is symmetric if $A = A^T$.
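A minimal Python sketch of the transpose and the symmetry test, assuming matrices are stored as nested lists of rows; `transpose` and `is_symmetric` are illustrative names. `zip(*a)` is the standard-library idiom that groups the i-th element of every row together, i.e. it yields the columns.

```python
from typing import List

Matrix = List[List[float]]


def transpose(a: Matrix) -> Matrix:
    """Exchange rows for columns: entry (i, j) of A^T is entry (j, i) of A."""
    return [list(column) for column in zip(*a)]


def is_symmetric(a: Matrix) -> bool:
    """A matrix is symmetric when it is square and equal to its transpose."""
    return len(a) == len(a[0]) and a == transpose(a)


print(transpose([[1, 2, 3], [4, 5, 6]]))  # [[1, 4], [2, 5], [3, 6]]
print(is_symmetric([[1, 7], [7, 2]]))     # True
```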

The Determinant of a Matrix

DEFINITION: Determinants play an important role in finding the inverse of a matrix and in solving systems of linear equations. Determinants are defined only for square matrices, so in the following we assume the matrix is square (m = n). The determinant of a matrix A is denoted by det(A) or |A|. First the determinants of 2×2 and 3×3 matrices are introduced.

Determinant of a 2×2 matrix

Assume A is an arbitrary 2×2 matrix whose elements are given by:

$$A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$$

Then the determinant of this matrix is:

$$\det(A) = |A| = ad - bc$$

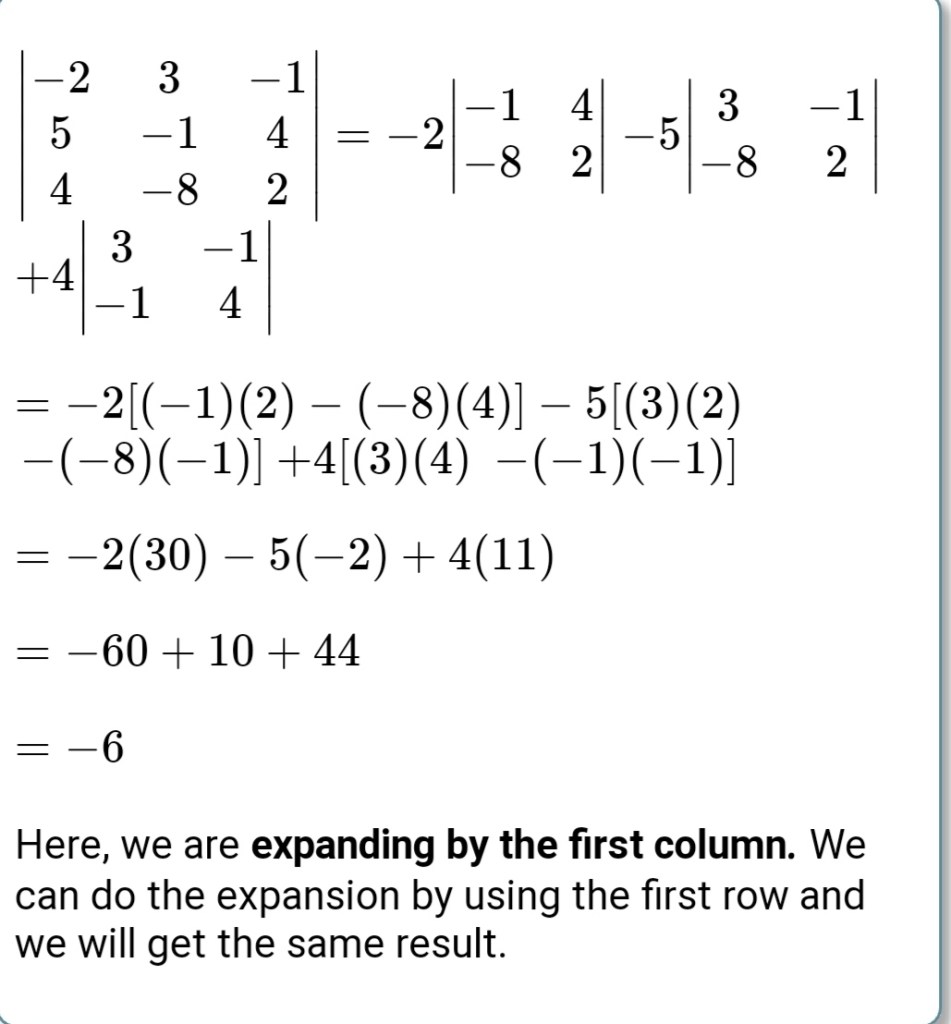

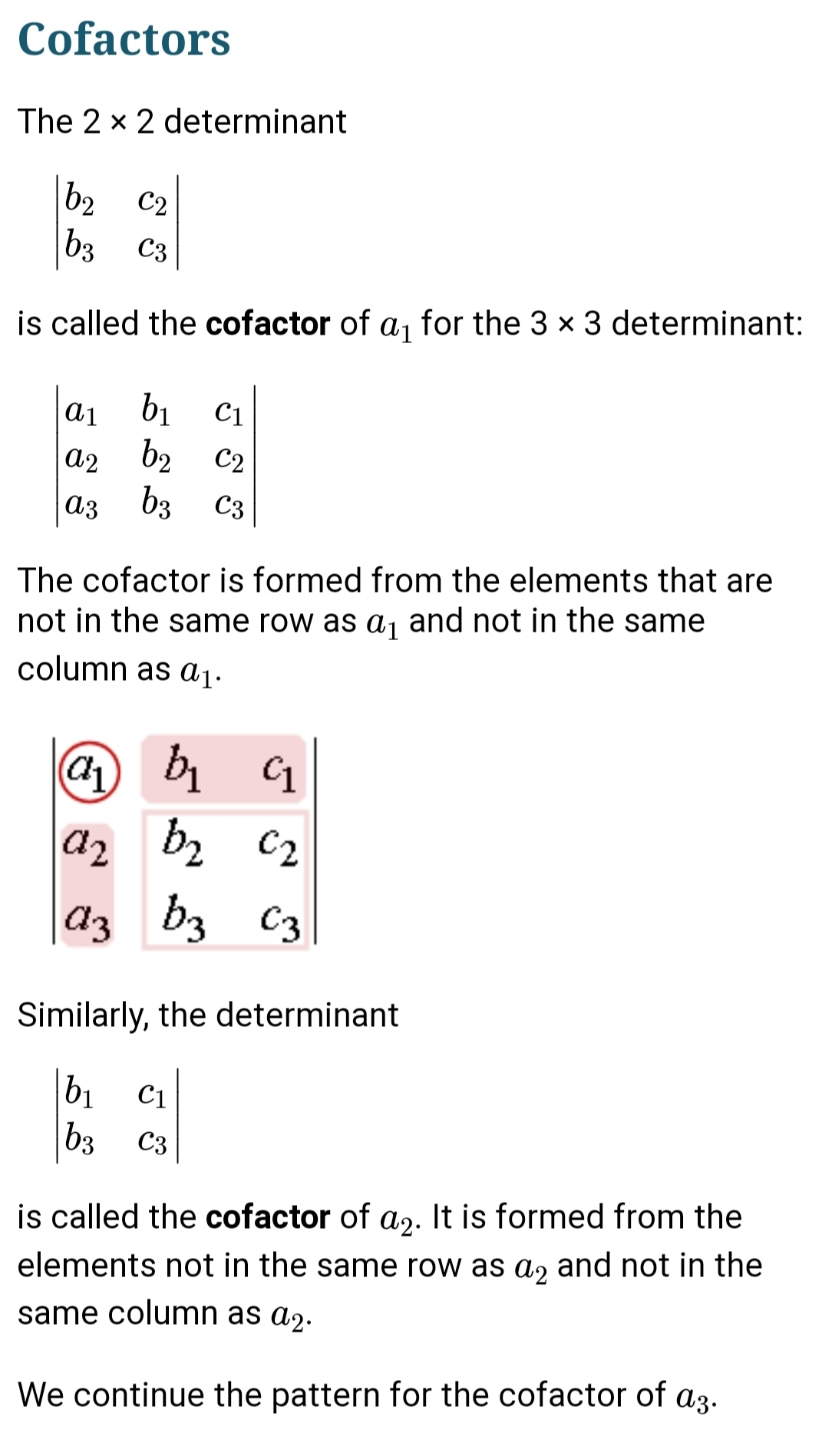
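A worked example of the 2×2 formula det(A) = ad − bc, using a matrix chosen here only for illustration:

$$\det\begin{pmatrix} 3 & 8 \\ 4 & 6 \end{pmatrix} = (3)(6) - (8)(4) = 18 - 32 = -14$$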

Determinant of a 3×3 matrix

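The determinant of a 3×3 matrix is a little more tricky to find. For this case, assume A is an arbitrary 3×3 matrix whose elements are given by:

$$A = \begin{pmatrix} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{pmatrix}$$

Then the determinant of this matrix is:

$$|A| = a_{11}\begin{vmatrix} a_{22} & a_{23} \\ a_{32} & a_{33} \end{vmatrix} - a_{12}\begin{vmatrix} a_{21} & a_{23} \\ a_{31} & a_{33} \end{vmatrix} + a_{13}\begin{vmatrix} a_{21} & a_{22} \\ a_{31} & a_{32} \end{vmatrix}$$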
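A minimal Python sketch of one standard way to evaluate a 3×3 determinant, cofactor (Laplace) expansion along the first row: each element of the first row is multiplied by the 2×2 determinant left after deleting its row and column, with alternating signs +, −, +. Matrices are plain nested lists, and the names `det2` and `det3` are illustrative.

```python
from typing import List

Matrix = List[List[float]]


def det2(m: Matrix) -> float:
    """2x2 determinant: ad - bc."""
    (a, b), (c, d) = m
    return a * d - b * c


def det3(m: Matrix) -> float:
    """3x3 determinant by cofactor expansion along the first row."""
    total = 0
    for j in range(3):
        # Minor: delete row 0 and column j, leaving a 2x2 matrix.
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        sign = (-1) ** j  # cofactor signs along the first row: +, -, +
        total += sign * m[0][j] * det2(minor)
    return total


print(det3([[1, 2, 3], [0, 1, 4], [5, 6, 0]]))  # 1
print(det3([[1, 2, 3], [4, 5, 6], [7, 8, 9]]))  # 0
```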

The Inverse of a Matrix

DEFINITION: Assume we have an n×n square matrix A which is non-singular (i.e. det(A) does not equal zero). Then there exists an n×n matrix $A^{-1}$, called the inverse of A, such that the following property holds:

$AA^{-1} = A^{-1}A = I$, where I is the n×n identity matrix.

The inverse of a 2×2 matrix

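Take, for example, an arbitrary 2×2 matrix A whose determinant (ad − bc) is not equal to zero:

$$A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$$

where a, b, c, d are numbers. The inverse is:

$$A^{-1} = \frac{1}{ad - bc}\begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$$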

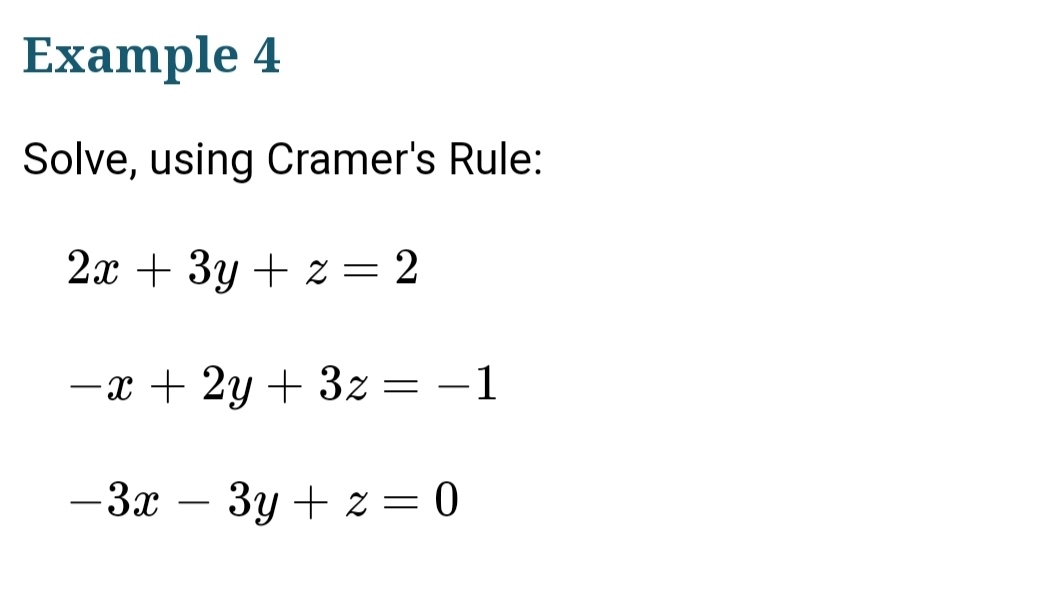

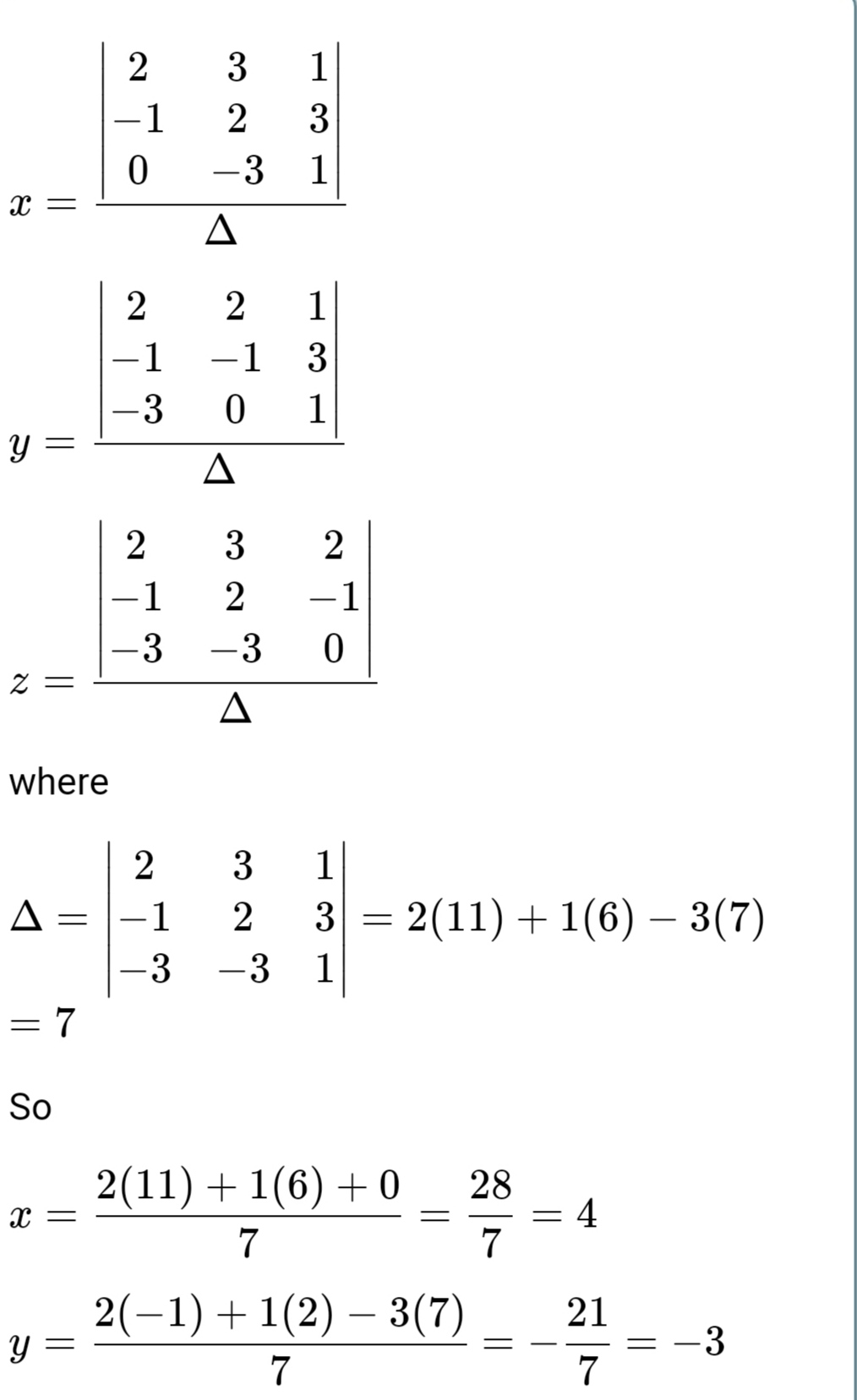
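A minimal Python sketch of the 2×2 inverse formula $A^{-1} = \frac{1}{ad - bc}\begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$, using the standard-library `fractions.Fraction` so that integer entries give exact results; the function name `inverse_2x2` is illustrative. It refuses singular matrices, matching the non-singularity requirement, and the usage lines check the defining property $AA^{-1} = I$.

```python
from fractions import Fraction


def inverse_2x2(m):
    """Inverse of [[a, b], [c, d]] via (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular (ad - bc = 0), so it has no inverse")
    f = Fraction(1) / det  # exact 1 / det(A) for integer entries
    return [[d * f, -b * f], [-c * f, a * f]]


A = [[4, 7], [2, 6]]
A_inv = inverse_2x2(A)
# Check the defining property A A^{-1} = I.
product = [[sum(A[i][k] * A_inv[k][j] for k in range(2)) for j in range(2)]
           for i in range(2)]
print(product == [[1, 0], [0, 1]])  # True
```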

Inverse Matrix Method

DEFINITION: The inverse matrix method uses the inverse of a matrix to help solve a system of linear equations written in matrix form as Ax = b. Pre-multiplying both sides of this equation by $A^{-1}$ gives:

$$A^{-1}Ax = A^{-1}b$$

or alternatively, since $A^{-1}A = I$ and $Ix = x$,

$$x = A^{-1}b$$

So by calculating the inverse of the matrix and multiplying it by the vector b, we can find the solution to the system of equations directly. For a 2×2 matrix, we found earlier that the inverse is given by

$$A^{-1} = \frac{1}{ad - bc}\begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$$

From the above it is clear that the existence of a solution depends on the value of the determinant of A. There are three cases:

- If det(A) does not equal zero, then a unique solution exists and is given by $x = A^{-1}b$.

- If det(A) is zero and b ≠ 0, then the solution will either not be unique or not exist.

- If det(A) is zero and b = 0, then x = 0 is a solution, but, as in the previous case, it is not unique: there are infinitely many solutions.

For example, consider a system of two linear equations in two unknowns:

Written in matrix form, the system looks like:

and, by rearranging, the solution is:

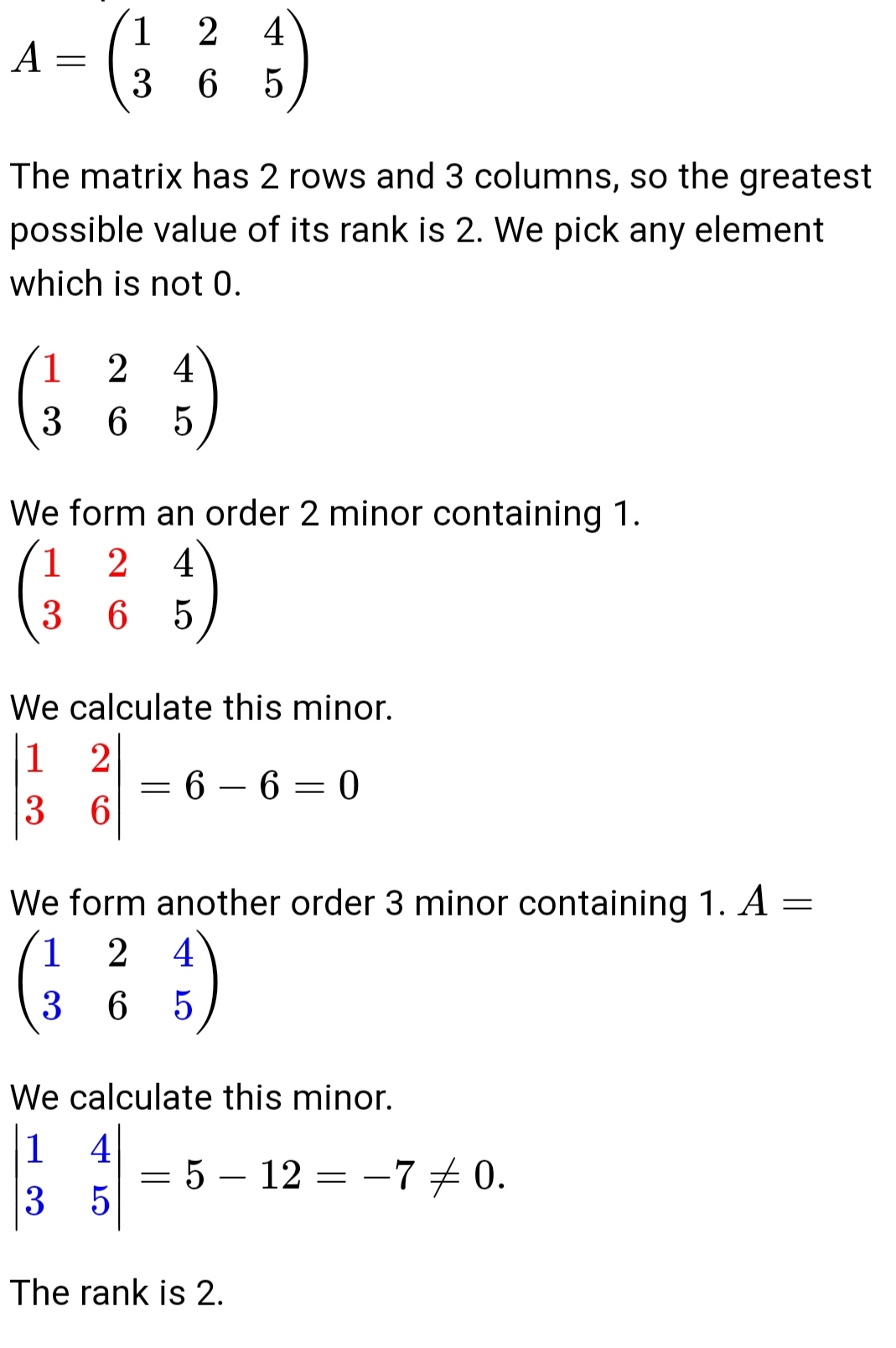
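The inverse matrix method for two equations can be sketched in Python: form $A^{-1}$ from the 2×2 formula and multiply it by the right-hand side vector b. The function `solve_2x2` and its argument layout (coefficient matrix as nested lists, right-hand side as a list) are assumptions made for this illustration; it stops when det(A) = 0, since then no unique solution exists.

```python
from fractions import Fraction


def solve_2x2(A, rhs):
    """Solve A x = rhs for a 2x2 system using x = A^{-1} rhs."""
    (a, b), (c, d) = A
    p, q = rhs
    det = a * d - b * c
    if det == 0:
        raise ValueError("det(A) = 0: no unique solution")
    k = Fraction(1) / det  # 1 / (ad - bc), kept exact for integer input
    # A^{-1} = k * [[d, -b], [-c, a]]; multiply it by the vector (p, q).
    x = k * (d * p - b * q)
    y = k * (-c * p + a * q)
    return [x, y]


# 2x + y = 5 and x + 3y = 10
print(solve_2x2([[2, 1], [1, 3]], [5, 10]))  # [Fraction(1, 1), Fraction(3, 1)]
```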

Rank of a Matrix

The rank of a matrix with m rows and n columns is a number r with the following properties, where a minor of order k is the determinant of a k×k submatrix formed by choosing any k rows and any k columns:

- r is less than or equal to the smaller of m and n.

- r is equal to the order of the largest minor of the matrix that is not 0.

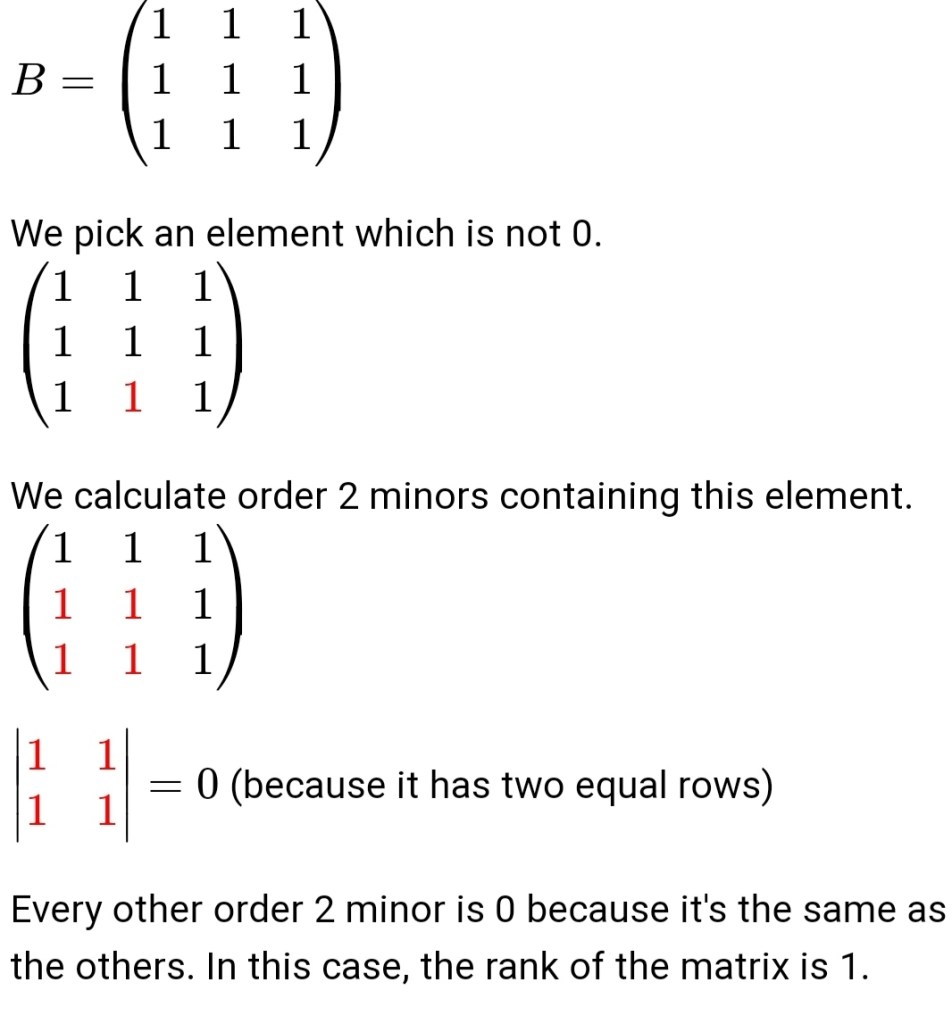

Determining the Rank of a Matrix

- We pick an element of the matrix which is not 0 (if every element is 0, the rank of the matrix is 0).

- We calculate the order 2 minors which contain that element until we find a minor which is not 0.

- If every order 2 minor is 0, then the rank of the matrix is 1.

- If there is any order 2 minor which is not 0, we calculate the order 3 minors which contain the previous minor until we find one which is not 0.

- If every order 3 minor is 0, then the rank of the matrix is 2.

- If there is any order 3 minor which is not 0, we calculate the order 4 minors which contain that minor until we find one which is not 0.

- We keep doing this until we reach minors of order equal to the smaller of the number of rows and the number of columns. The rank of the matrix is the order of the largest non-zero minor found.

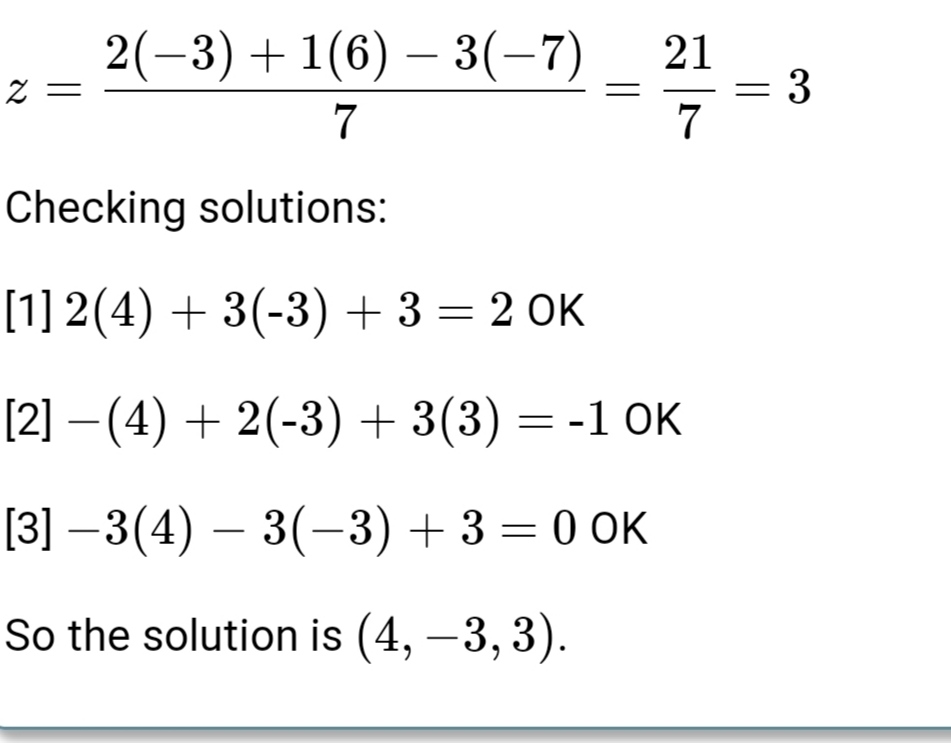

Example
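The procedure above only examines minors that border the previous non-zero one. The simpler brute-force Python sketch below applies the definition directly instead (the helper names `det` and `rank_by_minors` are illustrative): it tries every k×k minor, from the largest possible order downward, and returns the first order that has a non-zero minor. It is exponential in the matrix size, so it only suits small examples, and integer or `Fraction` entries keep floating-point round-off from deciding whether a minor is 0.

```python
from itertools import combinations


def det(m):
    """Determinant by cofactor expansion along the first row (small matrices only)."""
    if len(m) == 1:
        return m[0][0]
    total = 0
    for j in range(len(m)):
        minor = [row[:j] + row[j + 1:] for row in m[1:]]
        total += (-1) ** j * m[0][j] * det(minor)
    return total


def rank_by_minors(m):
    """Largest k such that some k x k minor of m is non-zero (0 for the zero matrix)."""
    rows, cols = len(m), len(m[0])
    for k in range(min(rows, cols), 0, -1):
        for rs in combinations(range(rows), k):      # choose k rows
            for cs in combinations(range(cols), k):  # choose k columns
                sub = [[m[i][j] for j in cs] for i in rs]
                if det(sub) != 0:
                    return k
    return 0


# The second row is twice the first, so the rank is below 3.
print(rank_by_minors([[1, 2, 3], [2, 4, 6], [1, 0, 1]]))  # 2
```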

Eigenvalues and Eigenvectors

An eigenvector of a square matrix A is a non-zero vector, represented by a column matrix X, such that multiplying X by A gives a vector AX that lies along the same line as X; in other words, A only scales X by some factor λ.

Mathematically, this statement can be represented as:

AX = λX

where A is a square matrix, the scalar λ is an eigenvalue of A, and X is an eigenvector corresponding to that eigenvalue.

Here, AX is a scalar multiple of X, so AX is parallel to X; this is what makes X an eigenvector.
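For instance, with a matrix and vector chosen here for illustration:

$$A = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}, \qquad X = \begin{pmatrix} 1 \\ 1 \end{pmatrix}, \qquad AX = \begin{pmatrix} 2 + 1 \\ 1 + 2 \end{pmatrix} = \begin{pmatrix} 3 \\ 3 \end{pmatrix} = 3X$$

so X is an eigenvector of A with eigenvalue λ = 3.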

Method to find the eigenvalues and eigenvectors of a square matrix A

We know that

$$AX = \lambda X \;\Rightarrow\; AX - \lambda X = 0 \;\Rightarrow\; (A - \lambda I)X = 0 \qquad (1)$$

Equation (1) has a non-zero solution X only if $(A - \lambda I)$ is singular, that is,

$$|A - \lambda I| = 0 \qquad (2)$$

Equation (2) is known as the characteristic equation of the matrix.

The roots of the characteristic equation are the eigenvalues of the matrix A.

Now, to find the eigenvectors, we substitute each eigenvalue into (1) and solve it by Gaussian elimination, that is, we reduce the augmented matrix $[A - \lambda I \mid 0]$ to row echelon form and solve the resulting linear system. Because $A - \lambda I$ is singular, this system always has free variables, so each eigenvector is determined only up to a non-zero scalar multiple.
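For the 2×2 case the method can be sketched in Python without a general eliminator. This is a shortcut swapped in for illustration, not the general procedure: expanding $|A - \lambda I| = 0$ gives the quadratic $\lambda^2 - (a + d)\lambda + (ad - bc) = 0$, and because $A - \lambda I$ is singular, a single non-zero row of $(A - \lambda I)X = 0$ already fixes X up to a scalar multiple. The name `eigen_2x2` is illustrative, only real eigenvalues are handled, and results are floating-point.

```python
import math


def eigen_2x2(m):
    """Return [(eigenvalue, eigenvector), ...] for a real 2x2 matrix [[a, b], [c, d]].

    Expanding |A - lambda*I| = 0 gives the characteristic equation
    lambda**2 - (a + d)*lambda + (ad - bc) = 0.
    """
    (a, b), (c, d) = m
    if b == 0 and c == 0:
        # Diagonal matrix: eigenvalues are the diagonal entries,
        # eigenvectors the standard basis vectors.
        return [(a, (1, 0)), (d, (0, 1))]
    trace, det = a + d, a * d - b * c
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = math.sqrt(disc)
    pairs = []
    for lam in ((trace + root) / 2, (trace - root) / 2):
        # A non-zero solution of (A - lam*I) X = 0, read off from one row.
        vec = (b, lam - a) if b != 0 else (lam - d, c)
        pairs.append((lam, vec))
    return pairs


print(eigen_2x2([[2, 1], [1, 2]]))  # [(3.0, (1, 1.0)), (1.0, (1, -1.0))]
```

Any non-zero multiple of a returned vector is an equally valid eigenvector.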

Some important properties of eigenvalues

- Eigenvalues of real symmetric and Hermitian matrices are real.

- Eigenvalues of real skew-symmetric and skew-Hermitian matrices are either purely imaginary or zero.

- Eigenvalues of unitary and orthogonal matrices have unit modulus, |λ| = 1.

- If $\lambda_1, \lambda_2, \ldots, \lambda_n$ are the eigenvalues of A, then $k\lambda_1, k\lambda_2, \ldots, k\lambda_n$ are the eigenvalues of kA.

- If $\lambda_1, \lambda_2, \ldots, \lambda_n$ are the eigenvalues of an invertible matrix A (so every $\lambda_i \neq 0$), then $1/\lambda_1, 1/\lambda_2, \ldots, 1/\lambda_n$ are the eigenvalues of $A^{-1}$.

- If $\lambda_1, \lambda_2, \ldots, \lambda_n$ are the eigenvalues of A, then $\lambda_1^k, \lambda_2^k, \ldots, \lambda_n^k$ are the eigenvalues of $A^k$ for any positive integer k.

- The eigenvalues of A are the same as the eigenvalues of its transpose $A^T$.

- The sum of the eigenvalues (counted with multiplicity) equals the trace of A, the sum of the diagonal elements of A.

- The product of the eigenvalues (counted with multiplicity) equals |A|.

- An n×n matrix has at most n distinct eigenvalues.

- If A and B are square matrices of the same order, then AB and BA have the same eigenvalues.
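As a quick check of the trace and determinant properties, take the matrix below (chosen here for illustration, not from the original). Its characteristic equation gives the eigenvalues:

$$A = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}, \qquad |A - \lambda I| = (2 - \lambda)^2 - 1 = \lambda^2 - 4\lambda + 3 = (\lambda - 3)(\lambda - 1) = 0 \;\Rightarrow\; \lambda_1 = 3,\ \lambda_2 = 1$$

$$\lambda_1 + \lambda_2 = 4 = 2 + 2 = \operatorname{tr}(A), \qquad \lambda_1 \lambda_2 = 3 = (2)(2) - (1)(1) = |A|$$

Since this A is real symmetric, its eigenvalues are real, consistent with the first property.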

Note: eigenvalues and eigenvectors are defined only for square matrices.

Eigenvectors are by definition nonzero. Eigenvalues may be equal to zero.